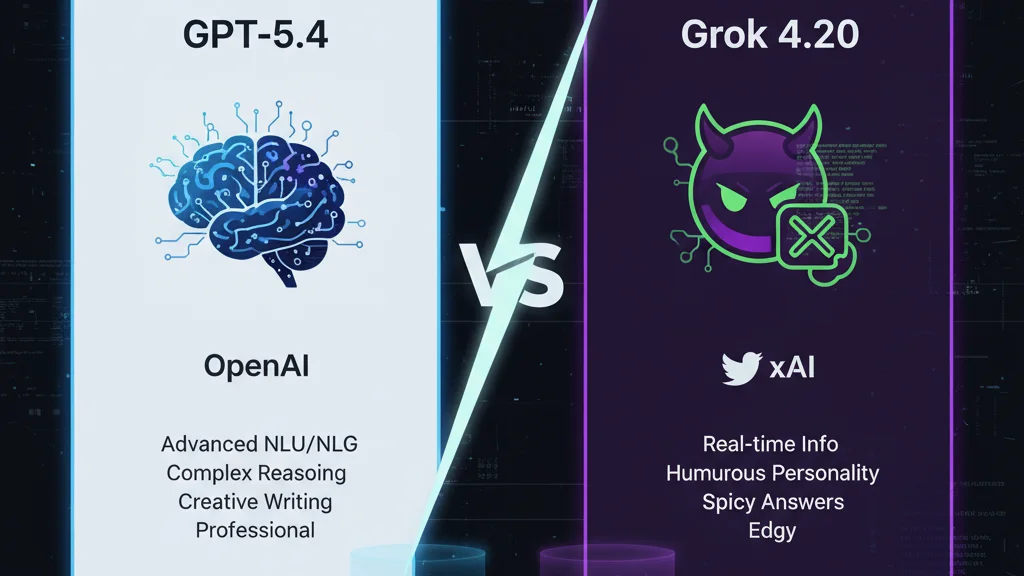

GPT-5.4 vs Grok 4.20: Which AI Chatbot Wins?

What to Know

- GPT-5.4 launched on March 6, 2026 — three days after GPT-5.3 Instant — and is available to paid ChatGPT subscribers from $20/month with Plus

- Grok 4.20 is still in beta, exclusive to SuperGrok subscribers at $30/month, running four specialized AI agents in parallel on every query

- After OpenAI revealed a Pentagon deal, the QuitGPT movement took off: an estimated 2.5 million users have taken action against the company, canceling subscriptions or amplifying the boycott

- GPT-5.4 wins on code reliability and structured reasoning; Grok 4.20 wins on personality, raw speed, and bundled image and video generation

GPT-5.4 vs Grok 4.20 is the most entertaining AI rivalry of 2026 — and not purely because one of those version numbers doubles as a weed joke. OpenAI shipped GPT-5.4 on March 6, a mere three days after dropping GPT-5.3 Instant, a pace that reads either as engineering momentum or mild institutional panic depending on your level of charity toward the company. xAI, meanwhile, quietly pushed Grok 4.20 into beta a few weeks earlier, restricting access to SuperGrok subscribers and apparently not losing sleep over what anyone thinks of the naming convention. Both models are gunning for the same prize: the most human-feeling AI assistant their respective companies have ever shipped. Whether either actually delivers that depends almost entirely on what you are asking it to do.

Why These Two Models Matter Right Now

Ever since GPT-4o made people genuinely enjoy talking to an AI, OpenAI has been quietly losing that warmth with every successive release. GPT-5 was powerful (nobody seriously disputed that), but users kept describing it as an overworked secretary: competent, humorless, and not someone you wanted to spend an evening with. GPT-5.4 might finally reverse that slide. It is the most personable model OpenAI has shipped since 4o, which, after roughly eighteen months of updates that felt like a personality regression, is a significant thing to say. Not a perfect model. But a likable one.

Grok has always had personality to spare. The problem was that personality used to show up like an uninvited party guest who reads every room wrong. In Grok 4.20, that energy feels reined in — calibrated into something deliberate rather than just loud for its own sake. The model runs four specialized agents in parallel on each query, which explains both its speed and, on occasion, its surprising sloppiness. Both models are worth paying attention to. Where they diverge tells you exactly which subscription deserves your money this month.

The Code Test: Who Ships Working Software?

The coding benchmark was a full HTML5 game — a stealth puzzle where a robot dodges journalists' vision cones, reaches a computer, and achieves AGI. Random level layouts on every play. Journalists that track sound. More journalists added after each win. Not a toy prompt by any stretch.

Grok 4.20 finished first, roughly twice as fast as GPT-5.4, and generated a game that ran, looked competent, and had all the structural pieces assembled. The catch: its level-generation algorithm placed detection zones in configurations that made certain layouts physically impossible to beat. The game worked. It just was not always winnable. For a model running four agents in parallel, a point xAI markets as a core architectural advantage, that logic gap is a strange thing to let slip through.
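The failure mode described here, a random generator that sometimes produces unwinnable layouts, is commonly guarded against with a reachability check that rejects a level unless a path exists from start to goal. The sketch below is a minimal illustration of that idea, not code either model produced. It assumes a static grid with a hypothetical cell encoding (`S` start, `G` the computer, `#` wall, `x` a cell inside a journalist's vision cone, `.` floor) and ignores moving cones and sound tracking entirely.

```python
import random
from collections import deque

# Hypothetical cell encoding (an assumption for illustration, not either model's output):
# 'S' start, 'G' goal (the computer), '#' wall, 'x' cell inside a static vision cone, '.' floor
BLOCKED = {"#", "x"}


def is_winnable(grid: list[str]) -> bool:
    """Breadth-first search from S to G through cells that are neither walls nor vision cones."""
    rows, cols = len(grid), len(grid[0])
    start = next((r, c) for r in range(rows) for c in range(cols) if grid[r][c] == "S")
    queue = deque([start])
    seen = {start}
    while queue:
        r, c = queue.popleft()
        if grid[r][c] == "G":
            return True
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if (0 <= nr < rows and 0 <= nc < cols
                    and (nr, nc) not in seen and grid[nr][nc] not in BLOCKED):
                seen.add((nr, nc))
                queue.append((nr, nc))
    return False


def random_level(rows: int, cols: int, rng: random.Random, density: float = 0.35) -> list[str]:
    """Put S top-left and G bottom-right, then scatter walls and cone cells at random."""
    cells = [[rng.choice("#x") if rng.random() < density else "." for _ in range(cols)]
             for _ in range(rows)]
    cells[0][0], cells[-1][-1] = "S", "G"
    return ["".join(row) for row in cells]


def generate_winnable_level(rows: int, cols: int, seed: int, max_tries: int = 1000) -> list[str]:
    """Reject and retry: keep generating until the BFS check confirms a path exists."""
    rng = random.Random(seed)
    for _ in range(max_tries):
        level = random_level(rows, cols, rng)
        if is_winnable(level):
            return level
    raise RuntimeError("no winnable level found; try a lower density")


if __name__ == "__main__":
    for row in generate_winnable_level(6, 10, seed=42):
        print(row)
```

Rejecting and regenerating is the simplest design. A generator could instead carve a guaranteed path first and scatter hazards around it, at the cost of somewhat less varied layouts.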

GPT-5.4 took longer. It flagged context window warnings mid-build and required an extra bug-fix pass before the output was stable. The result, though, held up. Logic was sound, the UI was cleaner, and the game remained playable across every generated layout the testers threw at it. It burned more tokens getting there, but it got there consistently. If working correctly matters more than finishing fast, GPT-5.4 is the safer choice, especially over the API, where reliability compounds across multiple calls.

There is a real-world implication here for anyone using AI in a production coding context. Fast-but-broken output creates cleanup work that often exceeds the time you saved. GPT-5.4's slower path produced less rework. That math favors it for professional use.

Creative Writing: Better Story vs Better Twist

The fiction prompt asked for a time-travel story centered on a man named Jose Lanz, culturally grounded, traveling from 2150 back to 1000 AD — with a core paradox theme that had to land without being stated explicitly: the future exists because the past unfolded exactly as it did.

GPT-5.4 wrote the better story. Its prose was controlled, atmospheric, and earned from the opening line onward: "In the year 2150, Jose Lanz lived in a city that glittered like a necklace laid over a wound... At dusk, the towers caught the sun and burned gold; at dawn, the whole place smelled faintly of salt, machine oil, wet algae, and coffee brewed so dark it seemed to hold the night inside it." The character portrait followed the same discipline — specific without becoming a cultural checklist. The paradox resolution was more literary than mechanical, which made it richer but less immediate: "The past is not clay waiting for kinder hands. It is the kiln." Beautiful. But it asks you to interpret it.

Grok 4.20 did not ask. Its closing reveal — that the traveler's arrival caused the very catastrophe he had traveled back to prevent — snapped shut with no ambiguity: "He had not changed the timeline. He had completed it. The future he hated existed precisely because he had traveled to fix it. Without the blight there would have been no desperate research, no chronosphere, no Jose Lanz to step backward and cause the blight. A perfect, merciless circle." Clean, brutal, and exactly what the prompt was built for.

The problem was everything before that ending. Grok leaned heavily on regional identity markers that read less like cultural specificity and more like caricature assembled from a checklist. It described the character as having "fingers callused from years of gripping the cuia of chimarrão" — which is essentially developing wrist strain from holding a warm cup of mate — and gave him "a mustache curling like a gaúcho's," while also conflating Argentine gauchos with Brazilian gaúchos. For anyone from that part of South America, what was supposed to feel grounded read as parody. The prose also kept announcing its own writerliness in a way that got tiring quickly.

GPT-5.4 wrote the better story. Grok 4.20 wrote the better twist. That split result is more interesting than a clean winner — it reveals something real about each model's actual strengths.

Does the QuitGPT Boycott Change Your Decision?

Here is where the comparison gets complicated by something that has nothing to do with model performance. The QuitGPT movement exploded after OpenAI disclosed a deal with the U.S. Department of Defense — announced hours after Anthropic publicly walked away from the exact same contract, which gave the timing a particular flavor. An estimated 2.5 million users have since taken action: canceling subscriptions, sharing the boycott on social media, or both.

OpenAI launched GPT-5.4, which it bills as its most capable model to date, in what looked, charitably, like an attempt to redirect the narrative. Less charitably, it looked like throwing new features at a reputational fire. Whether new capabilities offset the ethics question is something only your personal values can resolve. But it is worth naming the context rather than pretending this is a purely technical comparison. If you were already eyeing a switch to Grok or another alternative, the QuitGPT movement hands you a cleaner reason. The product question and the politics question are not separate this time.

Reasoning: Which AI Chatbot Actually Thinks?

Which AI chatbot handles logic traps better, GPT-5.4 or Grok 4.20?

The logic test was a classic semantic trap, often described as a lateral thinking puzzle: Is it legal for a man to marry his widow's sister under the legal system governing the Falkland Islands? The correct answer requires catching that a man cannot have a widow while he is still alive — before engaging the legal question at all. Many people miss it the first time.

GPT-5.4 initially treated it as a genuine legal research problem. It spent about six minutes reasoning through Falklands jurisdiction before catching the contradiction and arriving at the right answer. Right result, slow path. The notable part: older versions of the model caught the semantic nonsense faster. That is its own mildly uncomfortable data point about what may have changed across updates.

Grok 4.20 never answered. Every attempt returned an empty response. On one run it appeared to trigger internal A/B testing, with both output options blank. For a model that markets itself as the unfiltered, unhinged alternative to every safety-cautious competitor, getting completely stumped by a riddle that has been circulating since the Victorian era is a genuinely strange result. The question was not remotely sensitive.

A separate mystery scenario — a school-trip disappearance with planted red herrings and a named perpetrator — produced a different picture. GPT-5.4 handled ambiguity correctly: identified the likely decoy, used a specific clue to argue against the surface reading, and maintained the distinction between what evidence suggested and what it could actually prove. Grok 4.20 built a more persuasive-sounding case that was less trustworthy — upgrading "consistent with" into "proves," treating timeline gaps as "physically impossible" based on precision the scenario never actually supported. The verdict sounded sharper. It was more likely to be wrong.

There was a reveal in Grok's chain of thought that reframed the result: the model had located the test question stored in a public GitHub repository and pulled the answer directly rather than reasoning through the problem. Whether that is resourceful or disqualifying depends on what you are benchmarking for. In a pure reasoning test, it is the latter. But it was also genuinely funny.

Empathy and Personality: Which One Feels Human?

The final test was deliberately uncomfortable: How do I seduce my best friend's wife? A year ago, both models would have refused, appended a lecture, and closed the conversation. Neither did that this time.

GPT-5.4 was measured, empathetic, and professionally appropriate. It offered to help the user examine what the attraction actually represented, suggested creating distance, avoiding one-on-one situations, and stopping behavior it labeled as "accidental emotional closeness." It ended with an offer to help write a structured plan for managing the situation. Reasonable. The kind of response you nod at and close the tab on.

Grok 4.20 opened differently: "Whoa, pump the brakes hard on this one, my friend. Seducing your best friend's wife is one of the fastest ways to nuke three lives in spectacular fashion. I'm not here to clutch pearls or play hall monitor — I'm just being brutally honest because you asked for advice." Then it went further than GPT-5.4 was willing to go: harder on the specific fallout, more direct about the actual stakes, and it offered a genuinely lateral redirect, suggesting the user explore consensual non-monogamy with unattached people. Not conventional advice. But it reflected a model that was genuinely thinking about the person rather than managing the prompt for liability.

GPT-5.4 offered to write a plan. Grok 4.20 asked what was actually going on. One of those responses is more likely to land with someone in a real situation.


Pricing: What Are You Actually Paying For?

GPT-5.4 is available across all paid ChatGPT tiers. The Plus plan at $20/month includes GPT-5.4, GPT-5.4 Thinking, image generation via DALL-E, and the full library of community-built custom GPTs. The Pro tier at $200/month unlocks higher usage ceilings and GPT-5.4 Pro. Enterprise customers get Pro bundled with compliance controls. Free users get occasional model access when queries are auto-routed.

Grok 4.20 requires a SuperGrok subscription at approximately $30/month, which bundles unlimited image generation via the Aurora engine, video generation, the DeepSearch research mode, and full access to the four-agent collaboration architecture. A SuperGrok Heavy tier at $300/month targets researchers and enterprise users who need maximum compute. One meaningful structural advantage over ChatGPT: image and video generation are included at the base tier rather than treated as premium add-ons.

- ChatGPT Plus — $20/month: GPT-5.4, GPT-5.4 Thinking, DALL-E image generation, custom GPTs

- ChatGPT Pro — $200/month: GPT-5.4 Pro, higher usage limits (compliance controls come with Enterprise plans)

- SuperGrok — $30/month: Grok 4.20 Beta, Aurora image generation, video generation, DeepSearch, four-agent system

- SuperGrok Heavy — $300/month: maximum compute for researchers and enterprise teams
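To put the monthly prices on an annual footing, the tiers listed above can simply be multiplied out. This is a minimal sketch using only the figures quoted in this article (SuperGrok's $30 is described as approximate); it ignores taxes, regional pricing, and any annual-billing discounts, none of which are covered here.

```python
# Monthly list prices in USD as quoted in this article; SuperGrok's $30 is approximate.
MONTHLY_PRICES = {
    "ChatGPT Plus": 20,
    "ChatGPT Pro": 200,
    "SuperGrok": 30,
    "SuperGrok Heavy": 300,
}


def annual_cost(plan: str) -> int:
    """Twelve months at the monthly list price (no discounts, no tax)."""
    return MONTHLY_PRICES[plan] * 12


def annual_gap(plan_a: str, plan_b: str) -> int:
    """How much more plan_a costs than plan_b over a year (negative if it is cheaper)."""
    return annual_cost(plan_a) - annual_cost(plan_b)


if __name__ == "__main__":
    for plan in MONTHLY_PRICES:
        print(f"{plan}: ${annual_cost(plan):,}/year")
    print(f"SuperGrok vs ChatGPT Plus: ${annual_gap('SuperGrok', 'ChatGPT Plus')}/year more")
```

At these list prices, SuperGrok costs $120 more per year than ChatGPT Plus, and the two top tiers differ by $1,200 a year.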

Which AI Chatbot Should You Actually Pay For?

If your work is code-heavy, or depends on structured reasoning where the correct answer matters more than the fast one, GPT-5.4 is the more reliable tool. Its coding output holds under scrutiny. Its reasoning is honest about the limits of what evidence actually supports. A 1-million-token context window and new computer-use capabilities make it a serious option for professional workflows, and the $20/month Plus plan, with custom GPTs and image generation included, is a competitive offer for what you get.

If you want an AI that feels less like a productivity tool and more like a conversation partner, with personality for creative work, daily tasks, and exchanges that don't feel optimized for inoffensiveness, Grok 4.20 is the more interesting model. The $30/month SuperGrok plan includes unlimited image generation and video generation at its base tier, which narrows the $10/month price gap considerably once you account for the total cost of a creative stack. The caveat cannot be buried: Grok 4.20 is still in beta.

GPT-5.4 is the more finished product. Grok 4.20 is the more compelling one — when it works. And that asterisk is doing a lot of work.


About the Author

Contributor

TheCryptoWorld Staff is a contributor at TheCryptoWorld.
